Alex Constantine - January 11, 2014

January 10, 2014

Nearly 50 years ago, Gordon Moore suggested that the number of transistors that could be placed on a silicon chip would continue to double at regular intervals for the foreseeable future. That observation, known as Moore’s Law, has held true and has made computers cheap and ubiquitous. Cellphones are so inexpensive there are now more than six billion of them, almost one for every person on the planet.

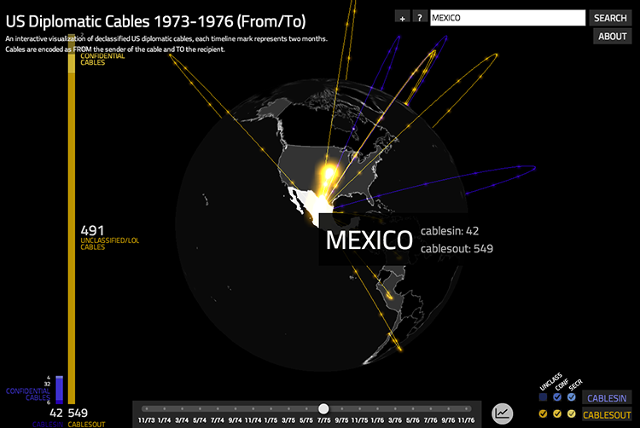

Moore’s Law has also made mass automated surveillance dirt cheap. Government surveillance that used to cost millions of dollars can now be carried out for a fraction of that.

We have yet to fully grasp the implications of cheap surveillance. The only thing that is certain is that we will be seeing a great deal more surveillance—of ordinary citizens, potential terrorists, and heads of state—and that it will have major consequences.

In the past, surveillance was labor intensive. Twice as much surveillance required twice as many people and cost twice as much. But when surveillance became automated, its cost declined exponentially.

To understand the economics of surveillance, it is worth looking more closely at Moore’s Law.

In 1965 Gordon Moore observed that the number of transistors on a single chip had doubled every year since the invention of the integrated circuit in 1958. Since that time, his Law has been modified. The increase in transistor count has slowed to around 40 percent per year. A number of similar predictions have been made about exponential rates of increase in network capacity, pixels, and magnetic storage. Many of those predictions have proven true.

These technologies are the building blocks for surveillance systems. If you combine a number of technologies that are improving at the rate of 40 percent a year in a system, you can end up with systems whose performance is increasing even faster. Consider computer systems.

Computers combine integrated circuit technology, semiconductor storage, magnetic storage, and network performance into a single system. As a result, in the 1990s, while transistor counts were increasing at a 40 percent rate, system processing power was growing at an 80 percent rate.

Something growing at the rate of 80 percent a year increases by a factor of more than 300 in ten years. If the capability of surveillance systems were to increase at this rate, in ten years a dollar’s worth of today’s surveillance could be bought for a fraction of a penny. Applications that were not feasible at a dollar suddenly become practical. These types of advances made the NSA collection of metadata feasible.
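The compounding arithmetic behind these figures can be checked with a short sketch. It assumes, as the hypothetical above does, that capability per dollar grows at a constant annual rate, so the cost of a fixed amount of capability falls by the same factor. The function names `growth_factor` and `future_cost` are illustrative, not taken from any source.

```python
def growth_factor(annual_rate: float, years: int) -> float:
    """Total multiplier after compounding `annual_rate` once per year for `years` years."""
    return (1.0 + annual_rate) ** years


def future_cost(cost_today: float, annual_rate: float, years: int) -> float:
    """Cost of today's capability after `years`, assuming cost falls as capability rises."""
    return cost_today / growth_factor(annual_rate, years)


if __name__ == "__main__":
    # The 40 and 80 percent rates are the ones quoted in the article.
    for label, rate in [("transistor count", 0.40), ("system processing power", 0.80)]:
        factor = growth_factor(rate, 10)
        cents = future_cost(1.00, rate, 10) * 100
        print(f"{label}: x{factor:.0f} in 10 years; "
              f"$1 of capability would cost about {cents:.2f} cents")
```

At 80 percent a year the ten-year factor comes to roughly 357, which is consistent with "more than 300" and puts a dollar's worth of capability at well under one cent. At 40 percent a year the factor is only about 29, which shows how much the higher compounding rate matters.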

And if the capability of surveillance systems continues to increase at this rate, technologies that, say, identify people’s faces when they enter a store or board a plane are suddenly practical.

To my mind, there are two broad classes of automated surveillance: participatory and involuntary. The line that separates them is fuzzy. Participatory surveillance arrived with the widespread use of the Internet. During this period users were actively involved in exposing their information over the Internet when they provided personal information in the course of purchasing products, searching for information, or interacting on social networking sites.

People were voluntary participants in the surveillance process even if they did not fully understand its implications. When they granted companies the right to use their information, they got services of great value in return.

Consciously or not, users were monetizing their privacy. That is, they traded information about themselves and access in virtual space and got free services in exchange. Amazon captured customer information and in return provided better selection and service, like one-click shopping.

Google, founded in 1998, provided valuable free search in return for serving up targeted ads to users. Facebook provided communities, timelines, and “walls” for people wanting to network. Facebook users—now numbering more than a billion—received these services free in return for allowing Facebook to use their information.

Involuntary surveillance on a large scale, driven by Moore’s Law, arrived shortly thereafter. Its primary instruments are cellphones, smartphones, GPS, and inexpensive cameras. When these devices are employed, there is no need for users to be actively involved in creating information about their activities. They get little or nothing in return for involuntarily providing valuable information about themselves. The NSA does not provide services of any kind to cellphone users in return for their metadata.

Nobody knows how quickly the cost of mass surveillance is declining or at what rate surveillance itself is growing. What we do know is that existing participatory and involuntary surveillance technologies are proliferating and new ones are being introduced and becoming more effective every day. As costs drop, new frontiers in surveillance open up. Low-cost facial recognition will let the government and retail establishments track us with our cellphones turned off and our loyalty cards left behind.

As the cost of automated surveillance continues to drop, there will be a rapid increase in surveillance applications. Disparate pieces of our personal puzzle will be brought together in monstrously large databases. Big data analysis tools will combine the bits and pieces to create a full picture of who we are, where we go, what we read and watch, what we do, and what we like. There will be files of facts about us such as our addresses, phone numbers, the calls we placed on our cellphones and where we were when we placed them, and the Internet sites we visited. But there will also be algorithmic predictions about our tastes, behavior, plans, opinions, thoughts, and health. Almost everything about us will be known or predicted. Those predictions may well become the self-fulfilling prophecies that determine our future.

While much of the world’s concern has been focused on NSA spying, I believe the greatest threat to my freedom will result from my being placed in a virtual algorithmic prison. Those algorithmic predictions could land me on no-fly lists or target me for government audits. They could be used to deny me loans and credit, screen my job applications and scan LinkedIn to determine my suitability for a job. They could be used by potential employers to get a picture of my health. They could predict whether I will commit a crime or am likely to use addictive substances, and determine my eligibility for automobile and life insurance. They could be used by retirement communities to determine if I will be a profitable resident, and employed by colleges as part of the admissions process.

Especially disturbing is the notion that once you become an algorithmic prisoner, it is very difficult to get pardoned. Ask anyone who has tried to get off a no-fly list or correct a mistake on a credit report.

Businesses are supporters of both participatory and involuntary surveillance. They want to use surveillance intelligence to market to customers, identify the most desirable ones, and employ location-based marketing. Involuntary surveillance is extremely appealing to government agencies that want to make our airports safe and protect us from crime and terror attacks. The average Internet user seems unconcerned about participatory surveillance. He is prepared to give up his privacy to get valuable service for free. As a result there is little or no organized resistance to automated mass surveillance.

My advice: Live your life with your eyes wide open, because Moore’s Law of Mass Surveillance is here to stay.

http://www.nextgov.com/cybersecurity/2014/01/great-computing-power-comes-great-surveillance/76607/