Alex Constantine - December 8, 2007

Oct 15th 2007 7:47PM

Aimee Weber

The Biomedical Engineering Laboratory at Keio University ... recently announced that they were able to control a Second Life avatar using an electrode-filled headset that monitors the motor cortex and translates the data into control inputs for the avatar. You can see this technology in action in this video.

So how would this all work, and what would it mean? ...

Most brain-computer interface (BCI) experiments have relied on invasive implants that target specific areas of the brain for better signal resolution. Not surprisingly, asking the user base of a virtual world to accept a brain implant poses some difficult marketing challenges.

Fortunately, the electroencephalogram (commonly known as the EEG) offers a non-invasive way of reading brain signals and may eventually yield a marketable input device for the masses, albeit with some challenges. For example, an EEG-based BCI requires training.

[Note- Please see the first chapter of Psychic Dictatorship in the USA, by me, for more on EEG as the basis of control of the brain - A. Constantine].

An interesting thing about newborn infants is that they're not born with complete control over their motor functions; instead, they go through a slow process of motor development. Initially their brains burst with semi-random impulses that make the child's body parts react in a similarly semi-random way. Eventually the child builds up a core of positive and negative experiences that encourage it to move in a more meaningful way. For example, grabbing the bottle means yummy food is coming, while wildly flailing a limb may mean pain is coming when it hits the side of the crib. This process of self-discovery starts with gross motor control of the torso and neck and slowly works its way outward to the extremities, where fine motor control such as articulating the fingers develops.

This whole process basically boils down to learning which brain impulses produce which physical-world results. Interestingly, this process of self-discovery was even simulated by a spider-like robot, created by Hod Lipson, that learned about its own robotic limbs and how to use them to move. This fascinating experiment was demonstrated at TED this March and is well worth a watch.

So if you were to strap on your mind-reading headpiece and fire up your Second Life-enabled electroencephalogram, you would likely begin by flailing about in the virtual world like a newborn infant. After a great deal of practice syncing up your brain activity with your avatar's motion, you would begin to develop extremely crude gross motor skills such as moving forward and back, side to side, stopping, and asking complete strangers for spare Linden dollars. (Kidding!)

But eventually every precious angelic newborn becomes a teen, gets lots of body piercings, and becomes a ripper who does diamond kickflips on the half-pipe. I don't know what any of that skateboarding lingo means, but it sounds like it takes a supreme level of motor control to achieve, the kind one gets after years of practice. Well, once the novelty phase of using an EEG to control a virtual avatar ends, the technology becomes more sensitive, and people spend hours, days, months, or even years refining their virtual mind-control skills ... we may not have to settle for GROSS motor control.

At this time the state of Second Life avatar motion is abysmal (through no fault of Linden Lab's). Avatar motion simply consists of playing pre-scripted animations, either painstakingly created in Poser or recorded from a human subject with a motion capture device (mocap). This allows avatars to exhibit greater bodily expression than rigid models, but it falls far short of the real-time physical reactions we take for granted in the real world. Ideally, avatars should be skeletal ragdolls (http://en.wikipedia.org/wiki/Ragdoll_physics) that not only react to forces imparted upon them but also respond to simulated muscle inputs from the user. According to the Second Life wiki, "Puppeteering" is indeed in the works, but the fact is that a keyboard and mouse are a woefully inadequate method for inputting the multitude of subtle, realistic human bodily controls in real time.

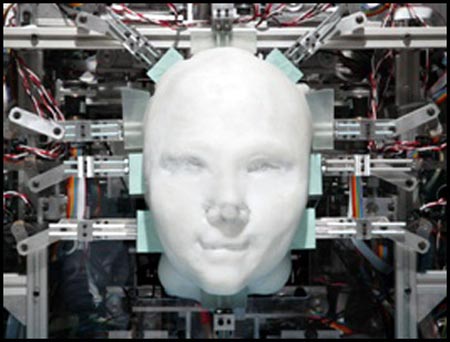

But if the avatar has a direct feed into the motor cortex, an experienced user may actually one day be able to control more than just forward and back. They might be able to cause a ragdoll avatar to wave, hug another resident, wiggle their fingers, or perform complex and beautiful interpretive dance motions complete with facial expressions showing love, fear, doubt, and passion ... all simply by thinking about it.

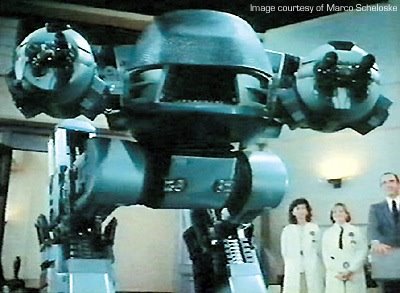

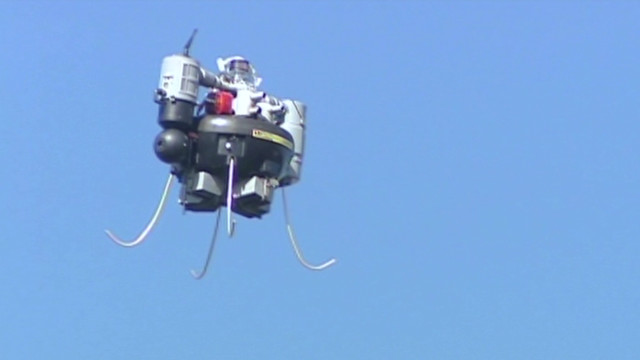

Of course this technology goes beyond Second Life. It could be used to remotely control anthropomorphic robots (actually, nothing says they have to be anthropomorphic!) that perform tasks too dangerous for humans. The physically impaired, such as Stephen Hawking, could enjoy new degrees of freedom, not just exploring virtual worlds but moving through the real world with mind-controlled robotic assistance. And as long as I'm drunk with optimism, perhaps in the very long term all humans will be outfitted with devices that make our fleeting desires a reality (Coq au vin for dinner! I was just thinking I was in the mood for a nice coq au vin!)

When enabling the human brain to input data directly into a computer becomes a mature, well-established science, the next challenge will take us in the other direction ... feeding computer information directly into our minds. Sure, we may be able to make our avatars hug a friend in the virtual world, but when will we feel the warmth of their loving embrace? ...

http://www.secondlifeinsider.com/2007/10/15/second-life-avatars-controlled-by-the-human-brain/